Recipe for conference talks: add a buzzword like "sharding" and show how mysqlnd helps you with asynchronous queries. Only two PHP database extensions give you asynchronous queries: mysqlnd and Postgres. Slides (OpenOffice) from the International PHP Conference 2008 have the details about the asynchronous query API in ext/mysqli (using mysqlnd).

Asynchronous, non-blocking IO is an old favourite. Although its an old idea, and it is a well known technology that is supported in many environments, it is still new for PHP database extensions. A year ago, I blogged about parallel and asynchronous queries in mysqlnd. Shortly after we had it implemented but never tested it and therefore never pushed it forward. Parallel queries, using a background thread in PHP, did not turn out to fly. But asynchronous queries have some potential.

While writing these lines, I am listening to a talk of David Sorria Parra about sharding. Sharding is an attempt to overcome the limitations of single database servers. Its about horizontal scaling over many machines by splitting your schema and distributing load over many machines. Sharding has a huge number of challenges involved: joins, unions, intersections, grouping, selection on groups, aggregation, primary key, referential integrity, (de-)normalization – plenty of things work different if you split your schema over multiple machines.

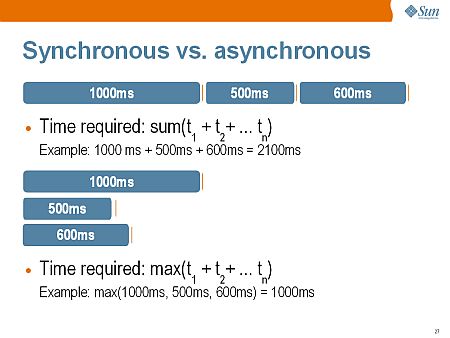

One thing is for sure about sharding: sometimes you will have to query multiple servers to manually compute, for example, an aggregation. Because there is no parallel execution in PHP, you do all the queries sequentially. Query wait times sum up. Not with mysqlnd: send out all the queries, do not wait for the results, do something useful and fetch results when available.

$all_links = array($shard1, $shard2, $shard3);

$processed = 0;

do {

$links = $errors = $reject = array();

foreach ($all_links as $link)

$links[] = $errors[] = $reject[] = $link;

if (0 == ($ready = mysqli_poll($links, $errors, $reject, 1, 0))

continue;

foreach ($links as $k => $link) {

if ($res = mysqli_reap_async_query($link)) {

mysqli_free_result($res);

$processed++;

}

}

} while ($processed < count($all_links));

Judging from the feedback I got during the talk, its time to publish the asynchronous API. Though, we need to cut off some edges. But that is a matter of days or weeks. Its not a matter of months or years. For now, please find more technical details in the slides.

A last quick comment from the conf. After one year of yelling at Andrey, Johannes gave me an experimental mysqlnd branch which allows you to plug-in a PHP Stream filter into the communication between mysqlnd and the MySQL Server. In other words: you could write a transparent proxy and do stuff like query rewriting, caching and so on inside your PHP application. However, that is evil and unstable – let us talk about it next year again…

One Comment

Leave a reply →